Running an AI project without structure is like building a house without a blueprint. Things may go well at first, but problems pile up as the project grows. Organizations are racing to deploy AI, which makes clear governance more urgent than ever. Effective governance keeps projects on track, protects stakeholders, supports ethical use of AI, and contributes to long-term success. It also helps teams make better decisions at every stage.

What Is AI Project Governance?

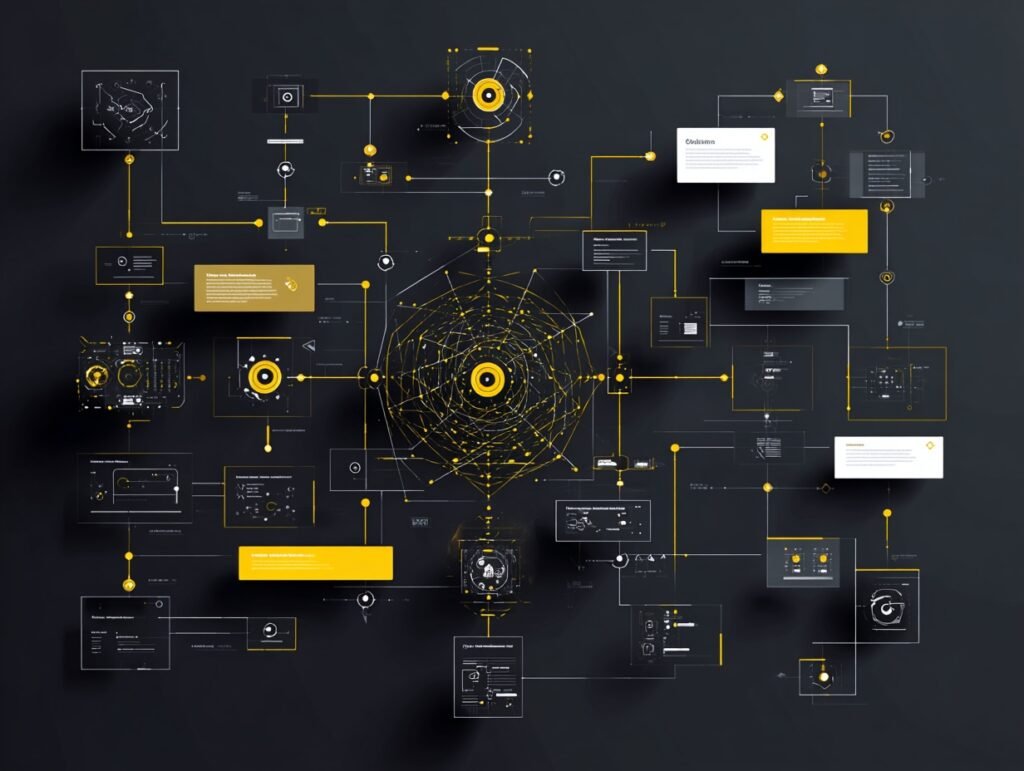

AI project governance refers to the set of policies, processes, and standards that guide the development, deployment, and monitoring of AI systems. Think of it as the rulebook for your AI team. Without it, projects can quickly drift off course, decisions get made inconsistently, and accountability becomes murky.

Governance isn’t just compliance—it builds trust. When stakeholders see clear decision-making, they feel confident in outcomes. A strong structure also catches problems early, saving time and money.

According to Mäntymäki et al. (2022), organizational AI governance encompasses the mechanisms that ensure AI is developed and used responsibly within an organization. Governance forms the backbone of any serious AI initiative. Without it, even technically impressive projects can run into serious trouble.

Why AI Governance Matters More Than Ever

The AI landscape is changing fast. New models, tools, and regulations are constantly emerging. Organizations without governance risk regulatory penalties, reputational damage, and avoidable project failures.

The stakes are high—AI systems affect hiring decisions, loan approvals, medical diagnoses, and more. Without proper oversight, biased or flawed AI can cause real harm. Organizations cannot afford to take that risk lightly.

The OECD (2023) has outlined a framework emphasizing that AI systems must be transparent, robust, and accountable. These principles are not just ideals. They are increasingly becoming legal requirements in many regions around the world. So governance is not optional. It is essential for any organization that is serious about AI.

Building a Strong AI Project Governance Framework

Start with clarity. Define what your organization expects from AI projects. Set clear goals, ethical standards, and success metrics from the start.

Clarify responsibilities. Define roles clearly so decisions don’t fall through the cracks. When no one is accountable, mistakes are harder to trace and fix.

Next, document your processes thoroughly. Good documentation is the foundation of any governance framework. It creates a shared understanding across the entire team and makes audits and reviews much easier to conduct. Shneiderman (2022) argues that human-centered AI design requires structured governance to ensure that systems remain aligned with human values throughout their lifecycle. That is a point worth taking seriously as your organization grows its AI capabilities.

Roles and Responsibilities in AI Governance

Governance works when roles are clear. Key players include the project sponsor for strategy, the project manager for coordination, and data scientists, engineers, and ethicists who build and evaluate the system.
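One way to make role clarity checkable is to record who owns each key decision and then look for gaps. The sketch below is only an illustration: the `Decision` class, the decision names, and the rule that each decision should have exactly one accountable role are assumptions, not something prescribed by the sources cited here.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """A governance decision and the roles attached to it (hypothetical model)."""
    name: str
    accountable: list = field(default_factory=list)  # roles that own the decision
    consulted: list = field(default_factory=list)    # roles that give input


def find_ownership_gaps(decisions):
    """Return names of decisions that do not have exactly one accountable role."""
    return [d.name for d in decisions if len(d.accountable) != 1]


# Illustrative entries; the decisions and role assignments are made up.
decisions = [
    Decision("Approve production release", accountable=["project sponsor"],
             consulted=["ethicist"]),
    Decision("Sign off on training data", accountable=[]),
    Decision("Accept fairness evaluation",
             accountable=["ethicist", "project manager"]),
]

gaps = find_ownership_gaps(decisions)
print(gaps)
```

Here the second decision has no owner and the third has two, so both would be flagged for the team to resolve.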

Governance needs an oversight committee or review board. This group approves major decisions, reviews risks, and ensures alignment with organizational values. Without oversight, teams may make conflicting choices.

Cross-functional collaboration is key. AI projects span many areas, so governance must involve legal, compliance, IT, HR, and business units. When all stakeholders collaborate, decisions improve.

Risk Management Within AI Project Governance

Every AI project carries risk—technical, ethical, and regulatory. Risk management must be central to governance.

Risk management begins with identifying possible issues before they arise. Assess risks for likelihood and impact. Then, use mitigation strategies and review them regularly.
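A simple risk register can make the likelihood-and-impact assessment concrete. The following is a minimal sketch under stated assumptions: the 1 to 5 scales, the likelihood-times-impact score, the review threshold of 12, and the example risks are all illustrative choices, not values taken from any standard.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)
    mitigation: str = ""

    @property
    def score(self) -> int:
        # Assumed scoring rule: likelihood multiplied by impact.
        return self.likelihood * self.impact


def prioritize(risks, review_threshold=12):
    """Rank risks by score, highest first, and flag those at or above the threshold."""
    ranked = sorted(risks, key=lambda r: r.score, reverse=True)
    return [(r.description, r.score, r.score >= review_threshold) for r in ranked]


# Hypothetical register entries for illustration only.
register = [
    Risk("Training data under-represents some user groups", 4, 4,
         "Audit and rebalance data"),
    Risk("Model accuracy degrades after deployment", 3, 3,
         "Scheduled performance monitoring"),
    Risk("New regulation changes documentation duties", 2, 5,
         "Quarterly legal review"),
]

for description, score, needs_review in prioritize(register):
    print(f"{score:>2}  review={needs_review}  {description}")
```

Re-running the ranking whenever likelihood or impact estimates change is one lightweight way to keep the regular review step honest.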

Compliance is tied to risk management. Regulations like the EU AI Act set standards for AI governance. Cath (2018) notes that governance must evolve alongside ethical and legal standards. Governance is ongoing, not a one-time task.

Monitoring and Evaluation After Deployment

Governance continues after deployment. Monitoring tracks how the system performs, and evaluation checks whether it still meets its original goals. Together, these support ongoing improvement.

Monitor technical metrics and fairness indicators. Is the model accurate? Is it biased? Does it perform consistently for all users? Regularly ask these questions throughout the system’s life.
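The questions above can be turned into numbers by breaking metrics down per user group. The sketch below computes accuracy and the rate of positive predictions for each group, plus the gap between the highest and lowest positive rate (often called the demographic parity difference). The function names, the toy data, and the choice of this particular fairness indicator are assumptions for illustration; a real project would pick indicators suited to its context.

```python
from collections import defaultdict


def per_group_report(y_true, y_pred, groups):
    """Compute accuracy and positive-prediction rate for each group."""
    totals = defaultdict(lambda: {"n": 0, "correct": 0, "positive": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        stats = totals[group]
        stats["n"] += 1
        stats["correct"] += int(truth == pred)
        stats["positive"] += int(pred == 1)
    return {
        g: {"accuracy": s["correct"] / s["n"],
            "positive_rate": s["positive"] / s["n"]}
        for g, s in totals.items()
    }


def demographic_parity_gap(report):
    """Difference between the highest and lowest positive-prediction rate."""
    rates = [m["positive_rate"] for m in report.values()]
    return max(rates) - min(rates)


# Toy labels and predictions for two hypothetical groups, "a" and "b".
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0, 0, 1]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

report = per_group_report(y_true, y_pred, groups)
gap = demographic_parity_gap(report)
print(report)
print(f"demographic parity gap: {gap:.2f}")
```

On this toy data, group "a" has accuracy 0.75 and group "b" has 0.50, with a positive-rate gap of 0.25. Tracking such figures over time shows whether the system keeps performing consistently for all users.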

Furthermore, AI project governance should include a structured feedback loop. Teams should regularly gather input from end users and affected communities. That feedback should then inform updates to the system and the governance framework. According to the OECD (2023), accountability mechanisms must include regular audits and transparent reporting to maintain public trust over the long term.

Making AI Project Governance Work Long-Term

Sustaining a governance framework over time takes real effort. Organizations often start strong, only to let things slide as new priorities emerge. Therefore, it is important to embed governance into the organizational culture, not just the formal process.

Leadership is crucial. Visible executive support signals that governance is to be taken seriously. When governance checks are built into regular project reviews and approval steps, they become routine.

Training is essential. Everyone on an AI project must understand the governance framework and their role within it. Training should be ongoing to keep pace with changes. Floridi et al. (2020) emphasize that ongoing education and stakeholder engagement are key to sustaining ethical AI.

Good governance doesn’t slow work. It builds trust, reduces risk, and helps create AI systems that deliver lasting value, a goal worth pursuing.

References

Cath, C. (2018). Governing artificial intelligence: Ethical, legal and technical opportunities and challenges. Philosophical Transactions of the Royal Society A, 376(2133). https://doi.org/10.1098/rsta.2018.0080

Floridi, L., Cowls, J., King, T. C., & Taddeo, M. (2020). How to design AI for social good: Seven essential factors. Science and Engineering Ethics, 26(3), 1771–1796. https://doi.org/10.1007/s11948-020-00213-5

Mäntymäki, M., Minkkinen, M., Birkstedt, T., & Viljanen, M. (2022). Defining organizational AI governance. AI and Society, 37(4), 1753–1768. https://doi.org/10.1007/s00146-022-01480-1

OECD. (2023). OECD framework for the classification of AI systems. Organisation for Economic Co-operation and Development. https://doi.org/10.1787/cb6d9eca-en

Shneiderman, B. (2022). Human-centered AI. Oxford University Press. https://global.oup.com/academic/product/human-centered-ai-9780192845290