Companies are rapidly automating decisions with AI in finance, HR, customer service, and operations. But moving fast without oversight creates risk. That’s why many organizations are investing in a formal AI governance board structure. This framework helps manage AI responsibly, creates accountability, reduces harmful bias, and builds trust with customers and regulators. Without it, costly mistakes can occur.

AI touches every part of the enterprise, from automated loan approvals to resume screening. The stakes are high, so governance must be a cross-functional, executive-level discussion, not just an IT issue.

Furthermore, regulatory pressure is growing fast. The EU AI Act is prompting businesses to carefully consider risk classification. In the U.S., the NIST AI Risk Management Framework has become a foundational reference point for organizations building responsible governance programs (Tabassi, 2023). So governance isn’t optional anymore. It’s a legal and competitive necessity.

Why Enterprises Need an AI Governance Board Structure

Building a governance board starts with recognizing that AI decisions have real-world consequences. They affect hiring, lending, healthcare, and more. Because of that, someone needs to be accountable. Research makes clear that this accountability needs to be embedded at the organizational level. That means putting the right people in the room. It means giving them real authority. And it means making sure those people represent diverse perspectives.

Additionally, there’s the issue of trust. Customers and stakeholders are growing more skeptical of AI. They want to know that companies are using it thoughtfully. A well-designed governance structure signals that the company takes those concerns seriously. Moreover, it creates internal consistency. Without one, different teams may approach AI ethics in completely different ways. That leads to inconsistent product and service offerings.

Therefore, setting up a governance board isn’t just about risk management. It’s about building a culture of responsible innovation. A 2025 MIT study found that organizations with digitally and AI-savvy boards outperform industry peers by nearly 11 percentage points in return on equity. Organizations that invest in governance early will be better positioned as regulations continue to tighten.

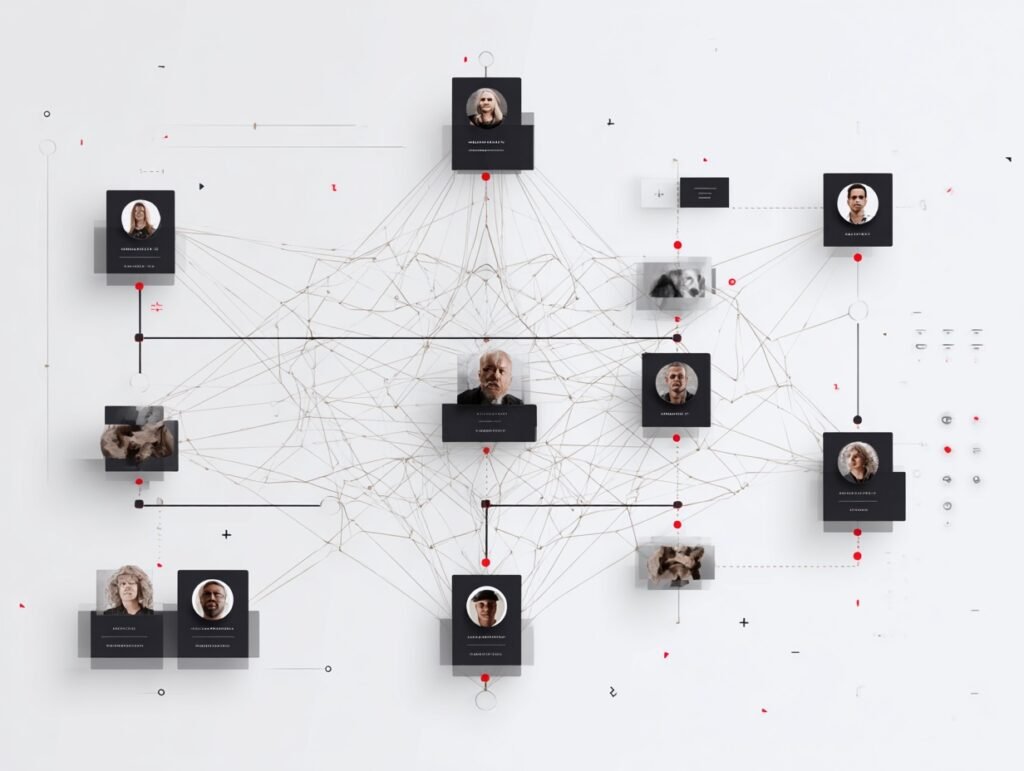

Who Should Sit on Your AI Governance Board

This is where many companies get it wrong. They either make the board too technical or too removed from the actual AI work. The sweet spot is a mix of both. You want technical experts who understand how the models work. But you also need legal counsel, ethicists, product leaders, and business unit representatives.

Research from a global survey found that AI implementation succeeds most reliably when organizational leaders actively engage with AI tools rather than delegating them entirely to technical teams (Angstrom et al., 2023). Engagement should not stop at the leadership level, though. Frontline employees and affected communities should also have a voice. That might mean creating formal advisory panels or feedback mechanisms.

Furthermore, senior leadership needs to be genuinely involved, not just in name. Having a C-suite champion makes a huge difference in whether governance recommendations are taken seriously. The Chief AI Officer role is growing in prominence for exactly this reason. Finally, don’t forget external perspectives. Third-party auditors, academic advisors, or civil society representatives can help catch blind spots that internal teams might miss. Board-level AI expertise is still rare. In fact, among the top 50 U.S. companies by market capitalization, only six had directors with AI backgrounds as of early 2025, and all six were tech companies (Samara & Abdallah, 2025).

Setting Up the AI Governance Board Structure: Roles and Responsibilities

Once you know who should be on the board, it’s time to define their roles. Roles and responsibilities need to be clearly documented. Otherwise, the board becomes a talking shop with no real impact.

At a minimum, the board should review and approve high-risk AI systems before deployment. It should establish ethical guidelines that teams can reference when building new models. And it should oversee ongoing monitoring of AI systems in production. That last part is often overlooked. AI systems can drift over time. What worked well at launch may perform quite differently six months later.
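To make the drift concern concrete, here is a minimal sketch of one way a team might flag a production system for board review. It is illustrative only. It assumes the team keeps a sample of model scores from launch and a recent sample from production, and it uses the Population Stability Index (PSI), a common drift statistic that this article does not prescribe. The function names and the 0.25 cutoff are assumptions; a board would set its own escalation criteria.

```python
import math
from typing import List, Sequence

# Assumed rule-of-thumb cutoff for a significant shift; not taken from any framework cited here.
REVIEW_THRESHOLD = 0.25


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of model scores using equal-width bins over the baseline range."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline scores must not all be identical")
    width = (hi - lo) / bins

    def bin_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base, cur = bin_shares(baseline), bin_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


def needs_board_review(baseline: Sequence[float], current: Sequence[float]) -> bool:
    """Flag a system when its score distribution has shifted past the assumed threshold."""
    return population_stability_index(baseline, current) > REVIEW_THRESHOLD


# Hypothetical data: scores logged at launch versus six months later.
launch_scores = [i / 100 for i in range(100)]
recent_scores = [0.9] * 100
print(needs_board_review(launch_scores, recent_scores))
```

A check like this only tells the board that something changed, not whether the change is harmful. Deciding what to do with a flagged system remains a human judgment.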

Furthermore, the board needs a clear reporting structure. Who does it report to? Typically, it reports to the CEO or the board of directors. That accountability chain matters. It ensures governance isn’t overlooked when business pressures mount. Additionally, meeting cadence matters. Quarterly meetings are common, but higher-risk organizations may need monthly reviews. Without sufficient oversight, accountability gaps can emerge, and problems can go undetected until they escalate. The NIST AI RMF specifically calls for continuous monitoring and periodic review as core components of effective governance (Tabassi, 2023).

Making the AI Governance Board Structure Work in Practice

Having a board on paper is one thing. Making it work in practice is another. One of the biggest challenges is buy-in. If business units see the governance board as a blocker, they’ll find ways to work around it. So the board needs to position itself as a partner, not a gatekeeper.

Communication is key here. Regular updates to the broader organization help build credibility. Transparent decision-making processes also help. When teams understand why certain AI uses are approved or rejected, they’re more likely to engage constructively with the process.

Training matters, too. Board members need to stay current on AI developments. The technology is evolving rapidly. A member who understood the landscape two years ago may be behind today. Therefore, investing in ongoing education is part of the job. Moreover, the right tools need to support governance. The NIST Generative AI Profile, released in 2024, provides specific guidance on managing the unique risks associated with large language models and other generative systems (Autio et al., 2024). Drawing on frameworks like this can give your board a practical foundation instead of starting from scratch.

Common Pitfalls When Building Your AI Board

Even well-intentioned governance boards make mistakes. One of the most common is over-engineering the structure. Companies sometimes spend so long designing the perfect governance model that they never deploy it. Done is better than perfect. Start simple and iterate.

Another pitfall is treating governance as a one-time project. An AI governance board structure needs to evolve alongside the technology and the regulatory environment. What works today may not work in two years. So build in regular reviews from the start.

Scope creep is another problem. Some boards try to govern every use case, even low-risk ones, which creates bottlenecks. Focus governance on high-impact systems first and expand gradually. Also, don’t underestimate culture; technical frameworks only go so far, so leaders must model responsible AI behavior.

Getting Started Without Overthinking It

You don’t need a perfect governance system on day one. Start small and build over time.

Begin by mapping your current AI use cases. Know what’s deployed and where. Then assess which ones carry the most risk. From there, you can identify who in your organization should oversee those systems.
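The mapping step above can be as simple as a structured list. The sketch below is a hypothetical starting point, not a standard method: the `AIUseCase` fields, the scoring weights, and the sample entries are all assumptions chosen for illustration, and a real inventory would use criteria your organization agrees on.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AIUseCase:
    name: str
    business_unit: str
    affects_individuals: bool  # e.g. hiring, lending, or healthcare decisions
    fully_automated: bool      # no human reviews the output before it takes effect
    in_production: bool        # already deployed, not just a pilot


def risk_score(use_case: AIUseCase) -> int:
    """Hypothetical additive score; the weights are placeholders, not a standard."""
    score = 0
    if use_case.affects_individuals:
        score += 2
    if use_case.fully_automated:
        score += 1
    if use_case.in_production:
        score += 1
    return score


def prioritize(inventory: List[AIUseCase]) -> List[AIUseCase]:
    """Return use cases ordered from highest to lowest score."""
    return sorted(inventory, key=risk_score, reverse=True)


# Illustrative entries only.
inventory = [
    AIUseCase("support reply drafts", "customer service", False, False, False),
    AIUseCase("resume screening", "HR", True, False, True),
    AIUseCase("automated loan approvals", "finance", True, True, True),
]

for use_case in prioritize(inventory):
    print(risk_score(use_case), use_case.name, f"({use_case.business_unit})")
```

Even a rough ranking like this gives the board a defensible place to start, and the scoring rules can be refined once the first reviews are under way.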

After mapping use cases and risks, designate your governance champion: someone with sufficient authority and influence to ensure board recommendations are executed. This person initiates the creation of the board and sustains its success.

Draw from established frameworks. Use the NIST AI RMF (Tabassi, 2023) to organize your board’s responsibilities across the AI lifecycle. Reference the AI Governance Maturity Matrix (Samara & Abdallah, 2025) to benchmark your current board readiness in terms of strategy, oversight, and culture. Throughout these steps, remember that a governance board demonstrates organizational maturity, supporting both transparency and compliance.

Governance doesn’t work in a vacuum. The best boards are led by executives who already understand how AI fits into broader business strategy. If your leadership team is still getting up to speed, check out our guide on AI for executive leadership and build from the top down.

References

Angstrom, R. C., Bjorn, M., Dahlander, L., Mahring, M., & Wallin, M. W. (2023). Getting AI implementation right: Insights from a global survey. California Management Review, 66(1), 5–22. https://doi.org/10.1177/00081256221141652

Autio, C., Schwartz, R., Dunietz, J., Jain, S., Stanley, M., Tabassi, E., Hall, P., & Roberts, K. (2024). Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile (NIST AI 600-1). National Institute of Standards and Technology. https://doi.org/10.6028/NIST.AI.600-1

Samara, G., & Abdallah, S. (2025). AI Governance Maturity Matrix: A roadmap for smarter boards. California Management Review. https://cmr.berkeley.edu/2025/05/ai-governance-maturity-matrix-a-roadmap-for-smarter-boards/

Tabassi, E. (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0) (NIST AI 100-1). National Institute of Standards and Technology. https://doi.org/10.6028/NIST.AI.100-1

Vaia, G., Bhatt, N., De Marco, M., & Rossignoli, C. (2025). Artificial intelligence in corporate boards: A dual-dimensional framework for integration across autonomy and structural levels. AI and Society. https://www.sciencedirect.com/science/article/pii/S2666764925000542