Understanding the AI Documentation Lifecycle

Managing documentation for AI systems is more complex than it sounds. The AI documentation lifecycle spans the entire lifespan of an AI product, from early design notes to post-deployment audits. Many teams overlook this process entirely, and skipping it creates serious problems later: decisions cannot be traced, results cannot be reproduced, and audits stall.

Documentation acts as a transparent, evolving record of decisions, data, and outcomes. Strong documentation improves AI outcomes, fosters transparency and accountability, and is becoming essential for regulatory compliance (Gebru et al., 2021).

This record is the foundation of an AI project. Without it, audits fail and trust erodes. Poor documentation hinders reproducibility, while early investment in structured records speeds up iteration and reduces mistakes.

Why Documentation Gets Neglected

Many development teams treat documentation as an afterthought, rushing to ship features while deprioritizing written records. As a result, critical decisions get lost, and when team members leave, institutional knowledge disappears.

Additionally, documentation tools have often been clunky and disconnected from development workflows. Engineers face friction when switching between coding environments and documentation platforms, which discourages consistent updates. Over time, the documentation falls out of date and becomes unreliable.

Beyond tools, there is a cultural dimension to this problem. In some organizations, documentation is undervalued until something goes wrong, and only then does everyone wish for better records. Shifting this mindset early is essential, and leadership buy-in plays an enormous role in driving that change (Mitchell et al., 2019).

Phases of an AI Documentation Lifecycle

To address these challenges, consider the typical stages of an AI project. First comes planning and scoping, when teams should record objectives, constraints, and intended use cases. These early documents set expectations and reduce ambiguity later.

Next comes data collection. This phase requires especially careful documentation. Researchers must record the source of the data, how it was labeled, and any potential biases (Gebru et al., 2021). Without this step, downstream audits become guesswork. Poorly documented datasets also increase the risk of harmful model behavior.

Then comes model development and evaluation. Teams should document architectural decisions, hyperparameter choices, and performance benchmarks. They should also record what the model cannot do (Mitchell et al., 2019). This kind of honest scoping prevents misuse and builds trust with stakeholders and end users.

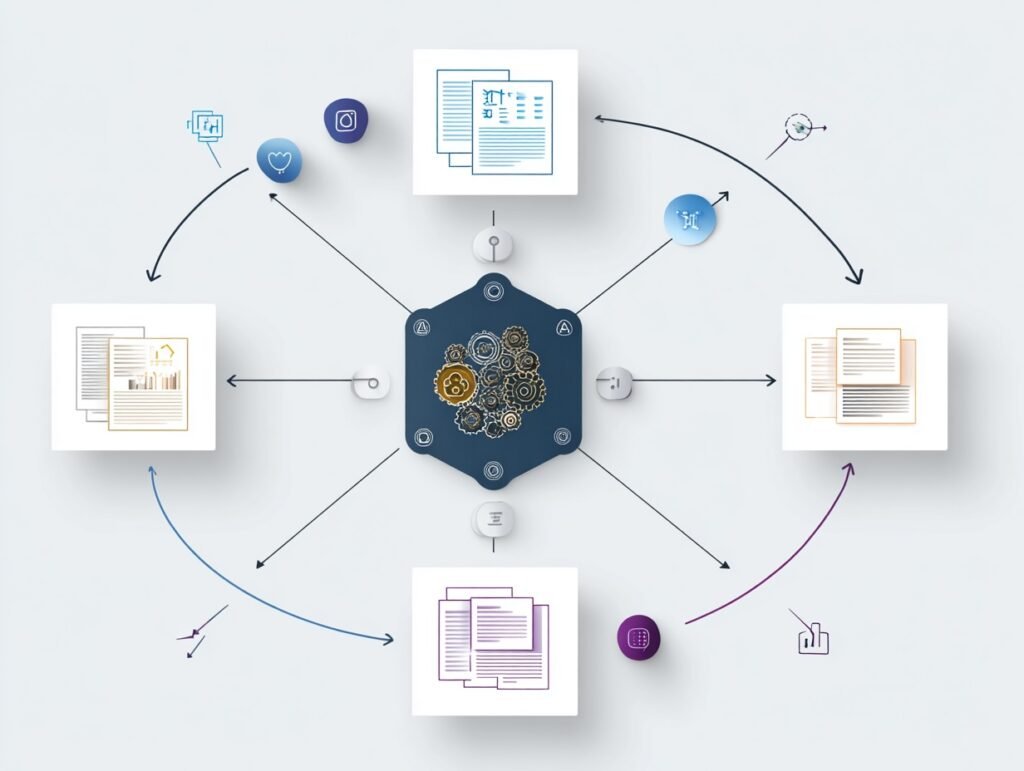

Finally, deployment documentation captures how the model was released, monitored, and eventually retired. Each phase feeds into the next. Therefore, treating these stages as interconnected is critical for a coherent documentation strategy.
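
The four phases above can be sketched as a simple checklist that reports which records are still missing. This is a minimal illustration, not a standard: the `Phase` names, the `REQUIRED_RECORDS` mapping, and the `missing_records` helper are all hypothetical, with the record names taken from the descriptions in this section.

```python
from enum import Enum


class Phase(Enum):
    PLANNING = "planning and scoping"
    DATA_COLLECTION = "data collection"
    MODEL_DEVELOPMENT = "model development and evaluation"
    DEPLOYMENT = "deployment"


# Hypothetical mapping from each phase to the records this section says it should produce.
REQUIRED_RECORDS = {
    Phase.PLANNING: ["objectives", "constraints", "intended use cases"],
    Phase.DATA_COLLECTION: ["data sources", "labeling process", "potential biases"],
    Phase.MODEL_DEVELOPMENT: [
        "architectural decisions",
        "hyperparameter choices",
        "performance benchmarks",
        "known limitations",
    ],
    Phase.DEPLOYMENT: ["release process", "monitoring", "retirement"],
}


def missing_records(completed: dict[Phase, set[str]]) -> dict[Phase, list[str]]:
    """Return, per phase, the required records that have not been written yet."""
    gaps = {}
    for phase, required in REQUIRED_RECORDS.items():
        done = completed.get(phase, set())
        missing = [name for name in required if name not in done]
        if missing:
            gaps[phase] = missing
    return gaps


if __name__ == "__main__":
    # Example project state: planning is fully documented, data collection is not.
    completed = {
        Phase.PLANNING: {"objectives", "constraints", "intended use cases"},
        Phase.DATA_COLLECTION: {"data sources"},
    }
    for phase, missing in missing_records(completed).items():
        print(f"{phase.value}: missing {', '.join(missing)}")
```

Because later phases depend on earlier ones, a report like this makes it visible when, say, model evaluation has started while the data collection records are still incomplete.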

Data Documentation as a Foundation

All subsequent documentation builds upon good data documentation. When teams use structured formats for recording dataset properties, they create reusable assets and foster easier collaboration across teams and organizations.

Pushkarna et al. (2022) introduced the concept of Data Cards, which are structured summaries of datasets that include provenance, intended use, and known limitations. This approach makes data decisions visible and inspectable. Consequently, teams can spot problems before they compound into something larger.
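
As a rough sketch of what such a structured summary might look like in code, the example below models a small record with the three properties named above plus a labeling field. The `DataCard` class and its field names are assumptions made for illustration; the template published by Pushkarna et al. is considerably more detailed.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class DataCard:
    """Illustrative subset of a dataset summary; not the full Data Cards template."""
    name: str
    provenance: str                # where the data came from
    intended_use: str              # what the dataset is meant for
    labeling_process: str = ""     # how labels were produced, if any
    known_limitations: list[str] = field(default_factory=list)

    def empty_fields(self) -> list[str]:
        """Names of fields left blank, so gaps are visible before review."""
        return [key for key, value in asdict(self).items() if not value]

    def to_json(self) -> str:
        """Serialize the card so it can be stored next to the dataset."""
        return json.dumps(asdict(self), indent=2)


if __name__ == "__main__":
    card = DataCard(
        name="support-tickets-v1",  # hypothetical dataset
        provenance="Exported from an internal help desk",
        intended_use="Training a ticket-routing classifier",
    )
    print(card.empty_fields())
    print(card.to_json())
```

Reporting blank fields explicitly is one way to make omissions inspectable rather than silent.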

Transparency in data documentation also supports regulatory compliance. The EU AI Act, for example, requires providers of high-risk AI systems to maintain technical documentation that covers their training data. Without clear documentation, organizations face legal exposure. They also risk reputational damage when their models produce unexpected results and no one can explain why.

Solid data documentation pays dividends at every later lifecycle stage: evaluation, audits, and handoffs all depend on knowing what data a model was built on.

Model Cards and Standardized Reporting

One of the most important contributions to structured AI documentation is the model card. Model cards are short documents that accompany trained machine learning models (Mitchell et al., 2019). They describe performance across different conditions, intended use cases, and ethical considerations.
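
A shared template can be as simple as a data structure with a fixed set of sections. The sketch below is an assumption-laden illustration: the `ModelCard` class, its field names, and the plain-text layout produced by `render` are hypothetical and cover only the three areas mentioned above plus limitations, not the full section list proposed by Mitchell et al.

```python
from dataclasses import dataclass, field


@dataclass
class ModelCard:
    """Illustrative subset of a model card; section names are simplified."""
    model_name: str
    intended_use: str
    # Metric values keyed by evaluation condition, e.g. a data slice or subgroup.
    performance: dict[str, float] = field(default_factory=dict)
    ethical_considerations: list[str] = field(default_factory=list)
    limitations: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the card as plain text using one fixed section order."""
        lines = [
            f"Model card: {self.model_name}",
            "",
            "Intended use",
            f"  {self.intended_use}",
            "",
            "Performance by condition",
        ]
        for condition, score in sorted(self.performance.items()):
            lines.append(f"  {condition}: {score:.3f}")
        sections = (
            ("Ethical considerations", self.ethical_considerations),
            ("Limitations", self.limitations),
        )
        for title, items in sections:
            lines += ["", title]
            if items:
                lines += [f"  - {item}" for item in items]
            else:
                # Keep empty sections visible instead of dropping them.
                lines.append("  (none recorded)")
        return "\n".join(lines)


if __name__ == "__main__":
    card = ModelCard(
        model_name="ticket-router",  # hypothetical model
        intended_use="Routing support tickets to the right team",
        performance={"overall": 0.91, "short inputs": 0.84},
        limitations=["Evaluated on English tickets only"],
    )
    print(card.render())
```

Rendering empty sections as "(none recorded)" is a deliberate choice here: a reviewer can tell the difference between a section that was considered and one that was never filled in.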

Standardized reporting formats provide value by reducing the cognitive burden on documentation authors. When everyone uses a shared template, important sections are less likely to be forgotten. Reviewers can also compare models more easily across projects and time periods.

However, model cards are only useful if they are kept up to date. Teams should treat them as living documents and update them whenever model behavior changes significantly, for example after retraining.

Adoption of model cards has grown quickly in recent years, and regulation is moving toward requiring comparable documentation. Organizations that establish card-maintenance routines now will be well positioned as regulatory requirements continue to expand (Bender et al., 2021).

Maintaining the AI Documentation Lifecycle Over Time

The AI documentation lifecycle does not end at deployment, yet the post-deployment period is often neglected. Many teams document during development and then stop, leaving the documentation out of sync with the deployed system.

Maintaining documentation requires ongoing effort. Teams should schedule regular documentation reviews just as they schedule code reviews. Model behavior also changes over time as production data drifts away from the training data, and these changes must be captured promptly and recorded accurately.
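
One lightweight way to put a review schedule into practice is to track when each document was last reviewed and flag the ones that are overdue. The sketch below assumes a hypothetical `DocRecord` structure and an arbitrary 90-day review interval; both are illustrative choices, not recommendations from the cited literature.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class DocRecord:
    title: str
    owner: str           # person accountable for keeping the document current
    last_reviewed: date


def overdue(records: list[DocRecord], today: date, max_age_days: int = 90) -> list[DocRecord]:
    """Return records whose last review is older than max_age_days, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    late = [r for r in records if r.last_reviewed < cutoff]
    return sorted(late, key=lambda r: r.last_reviewed)


if __name__ == "__main__":
    # Hypothetical documents and owners.
    records = [
        DocRecord("Model card", "alice", date(2024, 1, 10)),
        DocRecord("Data card", "bob", date(2024, 5, 2)),
    ]
    for r in overdue(records, today=date(2024, 6, 1)):
        print(f"{r.title} (owner: {r.owner}) last reviewed {r.last_reviewed}")
```

A check like this could run on a schedule alongside other project automation, so that an overdue review surfaces as a task rather than being discovered during an audit.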

Version control for documentation is equally important. Just as code gets tracked in repositories, documentation should follow the same discipline. Liang et al. (2022) note that comprehensive, evolving evaluations are necessary for tracking model capabilities over time. This insight applies directly to documentation as well.

Additionally, teams should assign clear ownership for documentation tasks. Defined roles create accountability and ensure records remain trustworthy throughout a product’s lifecycle.

Building a Documentation Culture

Technology alone cannot solve the documentation problem facing AI teams. Culture matters just as much. Teams that celebrate thorough documentation, especially cross-functional teams of engineers, data scientists, and managers, tend to produce more trustworthy AI systems. They also experience fewer surprises during audits and handoffs.

Building this culture starts with leadership. When senior engineers and product managers treat documentation as valuable work, junior team members naturally follow their lead. Consequently, good habits spread through the organization over time.

Training also plays a meaningful role. Many developers were never taught documentation best practices during their education. Short internal workshops can make a significant difference. Moreover, integrating documentation checkpoints into project timelines makes them harder to skip or postpone.

Recognition helps too. When teams receive acknowledgment for excellent documentation, motivation increases across the board. Over time, documentation stops feeling like a chore. Instead, it becomes a point of professional pride. This cultural shift ultimately sustains the AI documentation lifecycle across entire organizations and across multiple product generations.

Looking Ahead

AI documentation practices are evolving rapidly. Automated documentation tools are emerging that can capture decisions directly from development pipelines. Large language models are also beginning to assist with drafting and summarizing technical records, which reduces friction for busy teams.

Nevertheless, human judgment remains irreplaceable. While tools can capture what happened, only people can explain why decisions were made and what trade-offs were considered. As such, automation should support documentation, not replace the thinking behind it.

Regulatory pressure will also continue to grow. The EU AI Act and similar frameworks in other regions are making detailed documentation a legal requirement rather than a best practice. Organizations that build robust documentation processes now will have a clear advantage. Moreover, they will be better positioned to earn the trust of users, regulators, and partners alike.

Strong documentation discipline is essential for responsible AI. Now is the time to build this foundation for lasting trust and compliance.

References

Bender, E. M., Gebru, T., McMillan-Major, A., & Shmitchell, S. (2021). On the dangers of stochastic parrots: Can language models be too big? Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 610–623. https://doi.org/10.1145/3442188.3445922

Gebru, T., Morgenstern, J., Vecchione, B., Vaughan, J. W., Wallach, H., Daumé III, H., & Crawford, K. (2021). Datasheets for datasets. Communications of the ACM, 64(12), 86–92. https://doi.org/10.1145/3458723

Liang, P., Bommasani, R., Lee, T., Tsipras, D., Soylu, D., Yasunaga, M., Zhang, Y., Narayanan, D., Wu, Y., Kumar, A., Newman, B., Yuan, B., Yan, B., Zhang, C., Cosgrove, C., Manning, C. D., Ré, C., Acosta-Navas, D., Hudson, D. A., ... Koreeda, Y. (2022). Holistic evaluation of language models. arXiv. https://arxiv.org/abs/2211.09110

Mitchell, M., Wu, S., Zaldivar, A., Barnes, P., Vasserman, L., Hutchinson, B., Spitzer, E., Raji, I. D., & Gebru, T. (2019). Model cards for model reporting. Proceedings of the Conference on Fairness, Accountability, and Transparency, 220–229. https://doi.org/10.1145/3287560.3287596

Pushkarna, M., Zaldivar, A., & Kjartansson, O. (2022). Data cards: Purposeful and transparent dataset documentation for responsible AI. Proceedings of the 2022 ACM Conference on Fairness, Accountability, and Transparency, 1776–1826. https://doi.org/10.1145/3531146.3533231