How AI Is Reshaping the Modern Data Science Process

Artificial intelligence is reshaping the modern data science workflow, not by eliminating the lifecycle that practitioners have relied on for years or by replacing professional judgment, but by accelerating specific stages within that lifecycle.

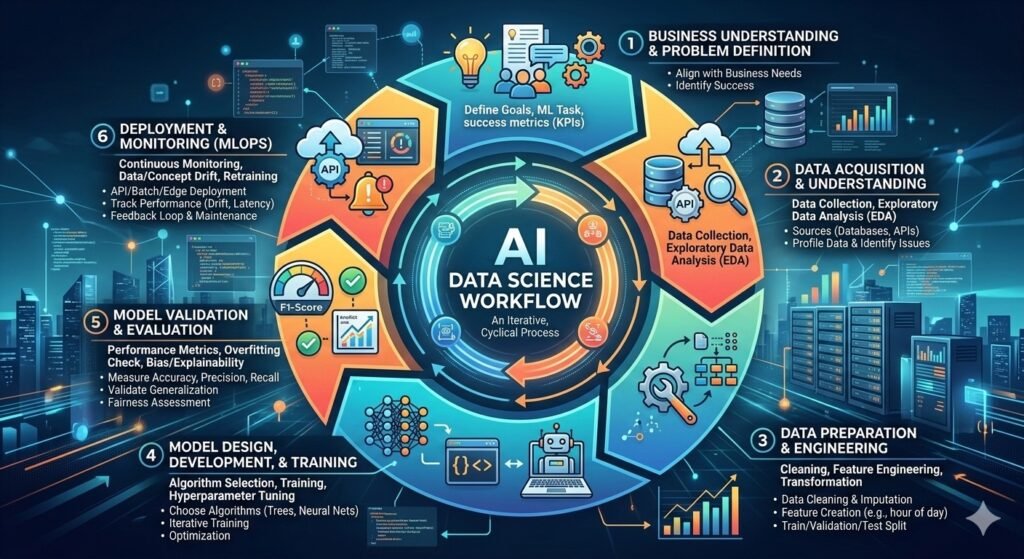

The structure itself remains grounded in established frameworks such as CRISP-DM, which defines the process as business understanding, data understanding, data preparation, modeling, evaluation, and deployment (Chapman et al., 2000; Wirth & Hipp, 2000). What has changed is how AI tools now integrate into those stages, reducing friction and increasing iteration speed.

To see where AI tooling helps in 2026, and where it does not, we need to walk through the workflow stage by stage.

Stage 1: Business Understanding and Problem Definition

Every strong AI data science workflow begins with business understanding. CRISP-DM explicitly identifies this as the first phase of any successful project (Chapman et al., 2000). At this stage, organizations define objectives, constraints, and measurable success criteria before technical modeling begins.

AI systems can assist in summarizing stakeholder discussions, drafting documentation, or helping clarify performance metrics. However, translating business needs into a structured analytical problem remains a human responsibility. Determining trade-offs, acceptable risk, compliance constraints, and long-term impact cannot be delegated to automation.

The workflow still starts with strategic clarity.

Stage 2: Data Acquisition and Understanding

Once the objective is defined, attention turns to acquiring and understanding data. This phase involves identifying relevant sources, retrieving datasets, examining structure, and assessing quality.

CRISP-DM describes data understanding as an iterative stage in which early exploration often reveals gaps, inconsistencies, or new questions (Wirth & Hipp, 2000). In modern environments, AI tools can accelerate exploratory analysis by generating summaries, identifying anomalies, and assisting with rapid querying.

Even so, determining relevance, legality, representativeness, and potential bias requires domain expertise. Automation can surface patterns, but interpretation remains contextual.
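
As a rough illustration of the kind of summary an assistant might draft during data understanding, the sketch below profiles a single numeric column using only the Python standard library. The function name `profile_column`, the sample values, and the 3.5 cutoff on the modified z-score are illustrative assumptions, not part of CRISP-DM or any particular tool.

```python
from statistics import median


def profile_column(values, cutoff=3.5):
    """Summarize one numeric column: missing count, median, and outlier candidates.

    `values` may contain None for missing entries. Outliers are flagged with the
    modified z-score (based on the median absolute deviation), which is less
    distorted by extreme values than a mean/standard-deviation rule.
    """
    present = [v for v in values if v is not None]
    missing = len(values) - len(present)
    if not present:
        return {"count": 0, "missing": missing, "median": None, "outliers": []}

    med = median(present)
    mad = median(abs(v - med) for v in present)
    if mad == 0:
        outliers = []  # no spread to measure against, so nothing is flagged
    else:
        outliers = [v for v in present if 0.6745 * abs(v - med) / mad > cutoff]

    return {"count": len(present), "missing": missing, "median": med, "outliers": outliers}


# Hypothetical order amounts with one missing entry and one suspicious value.
amounts = [12.0, 15.5, 14.0, None, 13.2, 250.0, 16.1]
report = profile_column(amounts)
print(report)
```

The function only surfaces candidates. Whether 250.0 is an entry error or a legitimate large order is exactly the kind of contextual call that stays with the analyst.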

Stage 3: Data Preparation and Engineering

Data preparation continues to be one of the most time-intensive aspects of the workflow. Industry surveys have long reported that data scientists spend a large share of their time cleaning and organizing data; in the CrowdFlower (2016) survey, respondents put that share at about 60%.

Raw datasets frequently contain missing values, inconsistent formats, and noisy signals. Transformations, feature engineering, normalization, and encoding remain essential steps before modeling can begin.

AI tools now assist by generating transformation scripts, suggesting feature combinations, and flagging potential anomalies. These capabilities reduce repetitive manual effort. However, improper preprocessing can introduce leakage or bias, and therefore human oversight remains critical.
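
One common way leakage enters preprocessing is fitting a transformation on the full dataset before splitting it. The sketch below shows the safer pattern under simple assumptions: a single numeric feature, a split that has already been made, and hypothetical helper names `fit_scaler` and `apply_scaler`. It uses only the standard library; in practice a pipeline abstraction from a library such as scikit-learn would typically fill this role.

```python
from statistics import mean, pstdev


def fit_scaler(train_values):
    """Learn centering and scaling statistics from the training split only."""
    center = mean(train_values)
    spread = pstdev(train_values) or 1.0  # guard against a constant column
    return center, spread


def apply_scaler(values, center, spread):
    """Standardize values with statistics that were learned elsewhere."""
    return [(v - center) / spread for v in values]


# Hypothetical feature values, already split into training and held-out rows.
train = [10.0, 12.0, 14.0, 16.0]
test = [11.0, 40.0]

center, spread = fit_scaler(train)                 # statistics come from train only
train_scaled = apply_scaler(train, center, spread)
test_scaled = apply_scaler(test, center, spread)   # test rows never influence them

# The leaky alternative would be fit_scaler(train + test), which lets the
# held-out value 40.0 shift the mean and spread used during training.
leaky_center, _ = fit_scaler(train + test)
print(center, leaky_center)
```

The gap between `center` and `leaky_center` is the information that would have leaked from the held-out rows into training. A generated transformation script deserves a review for this pattern before it is trusted.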

AI accelerates preparation. It does not remove accountability.

Stage 4: Model Design, Development, and Training

Model development traditionally involves selecting algorithms, training models, comparing performance, and tuning hyperparameters.

Automated machine learning systems, often referred to as AutoML, can partially automate model selection and hyperparameter optimization (Hutter et al., 2019). These systems improve experimentation speed and expand the search space of possible configurations.
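
To make hyperparameter optimization concrete, here is a minimal sketch of random search, one of the simplest strategies in this family. It is not how any specific AutoML system works. The one-feature ridge model, the log-uniform search range, the toy data, and the function names are all assumptions chosen to keep the example self-contained.

```python
import random


def fit_ridge_slope(xs, ys, penalty):
    """Closed-form slope for one-feature ridge regression through the origin."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + penalty)


def mean_squared_error(xs, ys, slope):
    """Average squared prediction error of the line y = slope * x."""
    return sum((y - slope * x) ** 2 for x, y in zip(xs, ys)) / len(xs)


def random_search(train, valid, n_trials=20, seed=0):
    """Sample penalties log-uniformly and keep the one with the lowest validation error."""
    rng = random.Random(seed)  # seeded so the search is reproducible
    trials = []
    for _ in range(n_trials):
        penalty = 10 ** rng.uniform(-3, 2)  # search range is an illustrative choice
        slope = fit_ridge_slope(*train, penalty)
        trials.append((mean_squared_error(*valid, slope), penalty))
    best_error, best_penalty = min(trials)
    return best_penalty, best_error, trials


# Hypothetical (xs, ys) pairs for the training and validation splits.
train = ([1.0, 2.0, 3.0, 4.0], [2.1, 3.9, 6.2, 7.8])
valid = ([5.0, 6.0], [10.1, 11.8])

best_penalty, best_error, trials = random_search(train, valid)
print(f"best penalty={best_penalty:.4g}, validation MSE={best_error:.4g}")
```

The search automates the sweep, but the metric it minimizes (validation mean squared error here) is an input the practitioner chooses, which is where the strategic decisions enter.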

However, choices about evaluation metrics, fairness constraints, interpretability requirements, and deployment context remain strategic decisions. Automation assists in exploration, but practitioners determine which models align with organizational goals.

Stage 5: Model Validation and Evaluation

Evaluation remains a critical safeguard in the AI data science workflow. Performance must be measured carefully to avoid overfitting and unintended bias.

Foundational machine learning literature emphasizes the importance of rigorous validation procedures and careful interpretation of results (Goodfellow, Bengio, & Courville, 2016). Cross-validation, calibration checks, and fairness assessments remain necessary components of responsible development.
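
The sketch below combines two of these checks in a simplified form: k-fold cross-validation, scored with the Brier score as one common summary of how well predicted probabilities match observed outcomes. The labels are made up, and the "model" is a deliberately trivial base-rate predictor, so the example shows the evaluation mechanics rather than a realistic model.

```python
def k_fold_indices(n_rows, k):
    """Yield (train_idx, test_idx) pairs; every row is held out exactly once."""
    folds = [list(range(i, n_rows, k)) for i in range(k)]
    for i, test_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, test_idx


def brier_score(probabilities, outcomes):
    """Mean squared gap between predicted probabilities and 0/1 outcomes (lower is better)."""
    return sum((p - y) ** 2 for p, y in zip(probabilities, outcomes)) / len(outcomes)


# Hypothetical binary labels; the "model" is a base-rate predictor that always
# outputs the positive rate seen in its training folds.
labels = [1, 0, 0, 1, 0, 1, 0, 0, 1, 0]

fold_scores = []
for train_idx, test_idx in k_fold_indices(len(labels), k=5):
    base_rate = sum(labels[j] for j in train_idx) / len(train_idx)
    predictions = [base_rate] * len(test_idx)
    fold_scores.append(brier_score(predictions, [labels[j] for j in test_idx]))

cv_brier = sum(fold_scores) / len(fold_scores)
print(round(cv_brier, 4))
```

A base-rate score like this is useful mainly as a floor: a candidate model that cannot beat it on held-out folds is not adding information, however good its training metrics look.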

AI systems can help generate evaluation scripts and highlight unusual output patterns. Still, determining whether a model is reliable, ethical, and operationally sound requires human judgment.

Stage 6: Deployment and Monitoring

Deployment is no longer the final step. Modern AI data science workflows incorporate MLOps principles that emphasize monitoring and lifecycle management.

Research on production machine learning systems has demonstrated how hidden technical debt can accumulate when deployed models are not actively maintained (Sculley et al., 2015). Drift detection, retraining schedules, version control, and governance frameworks are essential for long-term stability.
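
As one hedged example of drift detection, the sketch below computes a population stability index (PSI) between a reference sample and a live sample of a single feature. The equal-width binning, the bin count, the floor applied to empty bins, and the sample data are simplifying assumptions. The 0.25 threshold often quoted for a significant shift is a rule of thumb, not a standard.

```python
import math


def population_stability_index(expected, actual, n_bins=5):
    """Compare two samples of one feature; larger values indicate a bigger shift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins or 1.0  # fall back to 1.0 for a constant reference

    def bin_shares(sample):
        counts = [0] * n_bins
        for v in sample:
            # Clamp so values outside the reference range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), n_bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(sample), 1e-6) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values at training time and in two later windows.
reference = [float(v) for v in range(100)]
live_same = list(reference)
live_shifted = [v + 30.0 for v in reference]

psi_same = population_stability_index(reference, live_same)
psi_shifted = population_stability_index(reference, live_shifted)
print(psi_same, round(psi_shifted, 3))
```

A check like this can run on a schedule and raise an alert, but deciding whether a flagged shift warrants retraining, rollback, or no action remains a governance question.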

AI tools can assist with log analysis and performance tracking. However, stewardship, compliance, and accountability remain organizational responsibilities.

The Iterative Nature of the AI Data Science Workflow

The AI data science workflow is cyclical rather than linear. CRISP-DM was designed with feedback loops between evaluation and earlier stages (Wirth & Hipp, 2000). Insights from validation often require returning to feature engineering or even business understanding.

AI accelerates this cycle by reducing the time required to test and refine ideas. Faster experimentation strengthens iteration rather than replacing it.

Where AI Adds the Most Value

Research on generative AI in professional settings suggests that these systems augment knowledge work by improving productivity rather than replacing expertise outright (Brynjolfsson, Li, & Raymond, 2023).

Within data science, augmentation appears in reduced scripting time, faster exploratory analysis, and accelerated experimentation cycles. Documentation and debugging tasks can also be streamlined.

Yet strategic reasoning, contextual awareness, and ethical oversight remain fundamentally human.

The Optimized Workflow for 2026

The optimized AI data science workflow in 2026 retains the same foundational lifecycle defined decades ago. Business understanding anchors the process. Data acquisition and preparation demand rigor. Modeling benefits from automation but still requires oversight. Evaluation remains non-negotiable. Deployment requires governance. Iteration remains central.

The structure persists. The tools evolve.

References

Brynjolfsson, E., Li, D., & Raymond, L. R. (2023). Generative AI at work. National Bureau of Economic Research Working Paper No. 31161. https://www.nber.org/papers/w31161

Chapman, P., Clinton, J., Kerber, R., Khabaza, T., Reinartz, T., Shearer, C., & Wirth, R. (2000). CRISP-DM 1.0: Step-by-step data mining guide. SPSS Inc. https://www.the-modeling-agency.com/crisp-dm.pdf

CrowdFlower. (2016). The data scientist report. https://visit.figure-eight.com/rs/416-ZBE-142/images/CrowdFlower_DataScienceReport_2016.pdf

Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep learning. MIT Press. https://www.deeplearningbook.org/

Hutter, F., Kotthoff, L., & Vanschoren, J. (Eds.). (2019). Automated machine learning: Methods, systems, challenges. Springer. https://doi.org/10.1007/978-3-030-05318-5

Sculley, D., Holt, G., Golovin, D., Davydov, E., Phillips, T., Ebner, D., & Young, M. (2015). Hidden technical debt in machine learning systems. Advances in Neural Information Processing Systems, 28. https://papers.nips.cc/paper/5656-hidden-technical-debt-in-machine-learning-systems.pdf

Wirth, R., & Hipp, J. (2000). CRISP-DM: Towards a standard process model for data mining. Proceedings of the 4th International Conference on the Practical Applications of Knowledge Discovery and Data Mining. https://www.researchgate.net/publication/228749685